Content Moderation Trilemma of the Social Internet

And why Mastodon is a dead end

When I first used the internet in 2000, people believed in a free and open internet: a place where anyone could say anything, and anyone who wanted to hear it could listen. We were optimistic about an internet that would allow anyone to express their beliefs “without fear of being coerced into silence or conformity”. The free flow of information and the hyper-connectedness of the internet were supposed to promote mutual understanding among people and drive positive change.

However, two decades later, the egalitarian vision of a free speech heaven has failed to materialize. Instead, we have widespread online harassment, misinformation that causes real harm, and violent and hateful content that no one should have to witness. Rather than enhancing mutual understanding, free speech has led to increasing political polarization.

Content moderation has often been an afterthought on today’s internet. Every major internet platform today started with very few rules. As these platforms grew bigger and people began to do crazy, unpredictable and hateful things, rules and moderation were gradually introduced. There are still online spaces that adhere to the anything-goes mentality, such as 4chan, but they tend to turn ugly, and most people choose not to participate in them. In some sense, content moderation has made the internet more attractive and functional for most people. It is already part of the internet.

Moderators are human, and as such, they bring their own perspectives, biases, and beliefs to the job. Without question, content that is blatantly illegal, such as child pornography, should be removed. However, where the line between free speech and harm is less clear, content moderation becomes far more complicated. For some, certain political viewpoints are harmful and therefore should be censored. For others, all opinions, even controversial ones, should be allowed to circulate freely.

The real question, then, is not whether content moderation is censorship, but how it can be done in a way that serves both users and society. What speech should be restricted as harmful? By whom? In what way?

The Moderator’s Trilemma

The difficulties of content moderation are captured by the Moderator’s Trilemma. The trilemma consists of three goals:

Running a platform with a large and diverse user base

Using centralized, top-down content moderation policies

Avoiding angering its users

The past decade of content moderation controversies suggests that these three goals can’t all be met.

Large walled gardens like Facebook and Twitter are certainly unwilling to give up user growth or their control over content moderation. The thesis, therefore, is that dissatisfaction with moderation decisions on these centralized platforms is inevitable.

The Fediverse, including Mastodon, is presented as a solution to the Moderator’s Trilemma. The idea is to de-silo social media: give up on centralized moderation and devolve content moderation decisions to the individual instances that make up the overall network. Under this model, if users are dissatisfied with an instance’s policy, they can freely switch instances and, in principle, migrate their account data, including their followers and post history. Unlike traditional platforms that rely on high switching costs to keep users locked within their walled gardens, the Fediverse gives its users the freedom of exit. This freedom in turn strengthens users’ power to influence instances’ policies. This concept is known as content-moderation subsidiarity.

The growing pains of content moderation in Mastodon

The idea of devolving content moderation seems compelling, but its implementation in the Fediverse, especially in Mastodon, is far from ideal.

Fediverse instances are typically run by volunteers, leading to a lack of dedicated resources for determining and enforcing uniform content moderation standards. As a result, moderation decisions within the Fediverse can be arbitrary and ad-hoc, a problem that is amplified as instances grow in size.

An illustrative example is the controversy at journa.host, a Mastodon instance founded by Adam Davidson, a journalist who worked at The New York Times and The New Yorker. Users must prove that they are professional journalists to join the instance. One member was Mike Pesca, host of the popular news podcast “The Gist.” He posted a link to a New York Times story about health concerns associated with the puberty-blocking drugs sometimes prescribed to transgender youths and wrote, “This seemed like careful, thorough reporting.” Parker Molloy, another journa.host user and herself a transgender woman, responded strongly, accusing Pesca of anti-trans bigotry. Pesca defended his original post and criticized Molloy's response, writing, “you as a member of the activist community attack the article of bad bedfellows, insult me and mischaracterize the story as Republicans Versus Doctors.”

Journa.host suspended Pesca for referring to Molloy as an “activist,” on the grounds that the term was dismissive. The site also suspended Molloy for “attacking the integrity of another, who is a moderator on the server.” Even so, journa.host’s decisions were criticized from all sides. Some believed that journa.host should not have suspended Pesca for his comparatively restrained response to Molloy's attack. Others criticized journa.host for suspending Molloy and for not doing more to fight transphobia.

Journa.host gained such a reputation for transphobia that several large instances restricted or blocked their users’ access to it, a practice known in the Fediverse as defederation.

The controversy shows that the dominant culture of Mastodon supports heavy moderation, especially of users and content viewed as conservative.

If users truly had the freedom to exit, overzealously censorious instances would not be a problem. Empowering users to choose a moderation policy is, after all, the premise of content-moderation subsidiarity. In reality, however, moving an account in Mastodon is not as easy as some proponents suggest. User handles in the Fediverse are like email addresses (such as @kcchu@mastodon.social). The server’s hostname is part of the handle, which means that migrating to another Mastodon instance requires changing the handle and breaks links to the old profile and posts. While Mastodon allows users to set up a redirect from their old profile to the new one, moving old posts is not currently possible. A redirect may also be impossible if the old instance no longer exists (for example, if its owner shuts it down) or if the old account is suspended. Users may then have to start over, losing their previous content and followers.
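To make the handle problem concrete, here is a minimal Python sketch. It assumes a simplified `user@host` handle format and the usual `https://<host>/@<user>` Mastodon profile URL pattern; the `Handle` class and the `example.social` destination are hypothetical. The point is only that the hostname is part of the identity, so a move produces a different identifier and a different URL.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handle:
    """A simplified, hypothetical model of a Fediverse handle such as @kcchu@mastodon.social."""

    username: str
    host: str

    @classmethod
    def parse(cls, text: str) -> "Handle":
        # Strip the leading "@" and split "user@host" into its two parts.
        parts = text.lstrip("@").split("@")
        if len(parts) != 2 or not all(parts):
            raise ValueError(f"not a user@host handle: {text!r}")
        return cls(username=parts[0], host=parts[1])

    def profile_url(self) -> str:
        # Assumed Mastodon web profile URL pattern: https://<host>/@<username>
        return f"https://{self.host}/@{self.username}"


if __name__ == "__main__":
    old = Handle.parse("@kcchu@mastodon.social")
    # Moving to another (hypothetical) server keeps the username but changes the host...
    new = Handle(username=old.username, host="example.social")
    # ...so the handle and every profile link built from it change too.
    print(old == new)  # False
    print(old.profile_url(), "->", new.profile_url())
```

Because identity and location are fused in one string, anything that stored the old handle or URL has no way to find the account after the move unless the old server is still around to redirect.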

Defederation results in balkanization

The practice of defederation in the Fediverse is also problematic. Any Mastodon server admin can cut ties with another instance entirely, making posts from the blocked instance unavailable to the admin’s own users and preventing users of the blocked instance from commenting on, replying to, or direct messaging them.

Defederation is common among Mastodon instances. While content-moderation subsidiarity calls for individual instances to make their own moderation decisions, in practice volunteer moderators cannot oversee content on every federated instance. As a result, when a connected instance has lenient moderation standards, many admins choose to defederate the entire instance. This treats all users of the defederated instance as violators, even when only a few of them are causing controversy, as happened with journa.host. Even the largest Mastodon instances are not immune: mastodon.social, run by Mastodon’s creator Eugen Rochko, is also facing defederation by many smaller instances.

Defederation is fine as a last-ditch nuclear option against instances that spread spam or abuse. But when the blocklist becomes a form of protest against other instances’ moderation policies or capacity, endless infighting is inevitable. Instances are tempted to replace the blocklist with an allow-list because it is easier to manage. This dynamic has turned Mastodon into a balkanized network of polarized echo chambers, forcing instance admins to either adopt the strictest content moderation policy or risk being isolated from most Mastodon users. The outcome is a spiral of censorship that silences even slight deviations from the dominant opinion. It becomes impossible to discuss controversial topics honestly from different perspectives without sparking a controversy.
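The difference between a blocklist and an allow-list can be sketched in a few lines of Python. This is a hypothetical model, not Mastodon’s actual implementation: the policy is keyed on the remote hostname alone, which is why defederation cuts off every user on a blocked server, and why an allow-list also shuts out any new instance the admin has not yet reviewed. The host names are placeholders.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    BLOCKLIST = "blocklist"  # federate with every host except those listed
    ALLOWLIST = "allowlist"  # federate only with the hosts listed


@dataclass
class FederationPolicy:
    mode: Mode
    hosts: set[str] = field(default_factory=set)

    def __post_init__(self) -> None:
        # Hostnames are case-insensitive, so normalize once up front.
        self.hosts = {h.lower() for h in self.hosts}

    def accepts(self, remote_host: str) -> bool:
        listed = remote_host.lower() in self.hosts
        return not listed if self.mode is Mode.BLOCKLIST else listed


def visible_posts(policy: FederationPolicy, posts: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Filter (author, text) pairs, where author is "user@host"; the decision ignores the user part."""
    return [post for post in posts if policy.accepts(post[0].rsplit("@", 1)[1])]


if __name__ == "__main__":
    posts = [
        ("alice@blocked.example", "a controversial post"),
        ("bob@blocked.example", "an unrelated cat photo"),
        ("carol@friendly.example", "hello"),
    ]
    blocklist = FederationPolicy(Mode.BLOCKLIST, {"blocked.example"})
    # Bob never posted anything controversial, but he disappears along with Alice.
    print(visible_posts(blocklist, posts))

    allowlist = FederationPolicy(Mode.ALLOWLIST, {"friendly.example"})
    # Under an allow-list, a brand-new instance is unreachable until someone vouches for it.
    print(allowlist.accepts("brand-new.example"))  # False
```

Note that neither mode can express “block Alice but keep Bob”: the unit of decision is the server, not the person.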

While some may prefer to interact only with like-minded people, others are discouraged by this culture. Because instance admins hold significant power over their users, choosing which instance to join can be a major hurdle for first-time users. These problems diminish Mastodon’s potential to become the social internet that replaces Twitter.

Freedom of speech, and freedom to filter

Some may say that silencing misinformation and racist, sexist, homophobic, and transphobic speech in the network is a feature, not a bug. They argue that such speech has no social value and is seriously harmful to its targets as well as to society at large, and thus should be removed from the entire network (or even from society as a whole).

While this essay is not intended to be a philosophical discussion of freedom of speech, I invite readers to read the classic argument in Chapter 2 of John Stuart Mill’s essay On Liberty. Mill argued that defending our opinions against others deepens our understanding of our beliefs and keeps them from hardening into dogma. (Further reading: Jason Stanley's criticism of Mill, and the critics of that critique)

We are dealing here with bad speech, not incitement of immediate violence, which is already prohibited by law. Limiting freedom of speech to prevent direct and immediate harm to others, such as the spread of false claims about COVID-19 vaccines during a global pandemic emergency, can well be justified under Mill's harm principle. However, such restrictions should be exceptional and must be necessary and proportionate to a legitimate objective.

More importantly, “free speech is not the same as free reach,” as Renée DiResta has argued. (No, the phrase did not originate with Elon Musk.) Exercising freedom of speech does not mean that everyone has to listen.

Today’s internet is mediated by algorithms. Their job is to surface relevant content and predict what we want to see next. Algorithms are an important tool for making sense of the overwhelming amount of information online, but they also shape our attention by controlling our news feeds and recommendations. Unlike free speech, there is no right to algorithmic amplification.

Perhaps we cannot prevent jerks from using the social internet, but we surely can build a system where we don’t have to hear them.

But then the question becomes: who can, and who should, have the power to select what a user hears? There are legitimate concerns about bias in these algorithms and their lack of transparency. I argue that the key to this problem is user choice, or the “freedom to filter.”

The social internet should empower users to choose their own filtering methods or algorithms. Users could delegate control of their feed to one or a mix of recommendation services run by communities, commercial providers, or other institutions. We should establish a marketplace of algorithms that offers diversity, transparency and accountability.
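One way to picture delegating a feed to a mix of recommendation services is a weighted blend of independent scoring functions, each standing in for a different provider. The Python sketch below assumes that model; the `recency` and `popularity` scorers, the `Post` fields, and the weights are all hypothetical, and real services would rank far more richly. The design point is that the user, not the platform, holds the weights.

```python
from dataclasses import dataclass
from typing import Callable, Mapping, Sequence


@dataclass(frozen=True)
class Post:
    post_id: str
    age_hours: float
    likes: int


# A "recommendation service" here is just a function from a post to a score in [0, 1].
Scorer = Callable[[Post], float]


def recency(post: Post) -> float:
    # Hypothetical provider that favors new posts.
    return 1.0 / (1.0 + post.age_hours)


def popularity(post: Post) -> float:
    # Hypothetical provider that favors widely liked posts (capped at 100 likes).
    return min(post.likes / 100.0, 1.0)


def mixed_feed(
    posts: Sequence[Post],
    scorers: Mapping[str, Scorer],
    weights: Mapping[str, float],
    limit: int = 10,
) -> list[Post]:
    """Rank posts by the user-chosen weighted sum of each provider's score."""

    def combined(post: Post) -> float:
        return sum(weights.get(name, 0.0) * scorer(post) for name, scorer in scorers.items())

    return sorted(posts, key=combined, reverse=True)[:limit]


if __name__ == "__main__":
    posts = [Post("fresh", age_hours=0, likes=1), Post("viral", age_hours=24, likes=500)]
    services = {"recency": recency, "popularity": popularity}
    # Two users, same posts, different choices of how to filter.
    print([p.post_id for p in mixed_feed(posts, services, {"recency": 1.0})])     # ['fresh', 'viral']
    print([p.post_id for p in mixed_feed(posts, services, {"popularity": 1.0})])  # ['viral', 'fresh']
```

Swapping providers or changing weights changes what a user hears without removing anyone’s posts from the network.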

A step forward

A truly open social internet relies on several key technological capabilities:

Account portability: Maintains stable identifiers for users, regardless of the service provider

Authenticated and content-addressable storage: Enables the seamless migration of user data to a new location

Algorithmic control: User choice over what to hear, assisted by technology
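The content-addressing part of the second capability can be illustrated with a small Python sketch: each record is stored under the SHA-256 hash of its canonical JSON encoding, so its identifier depends only on its content, not on which server hosts it. This is a simplified assumption, not AT Protocol’s actual record format, and the `Store` and `migrate` names are hypothetical. It also omits the “authenticated” part, which in a real system would involve signing the data with a key the user controls.

```python
import hashlib
import json


def content_id(record: dict) -> str:
    """Address a record by the SHA-256 of a canonical JSON encoding (sorted keys, no whitespace)."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


class Store:
    """A hypothetical content-addressed store standing in for one hosting provider."""

    def __init__(self) -> None:
        self._records: dict[str, dict] = {}

    def put(self, record: dict) -> str:
        cid = content_id(record)
        self._records[cid] = record
        return cid

    def get(self, cid: str) -> dict:
        record = self._records[cid]
        # Any host can be checked: the data must hash back to the address we asked for.
        if content_id(record) != cid:
            raise ValueError(f"record under {cid[:12]}... does not match its content address")
        return record

    def ids(self) -> list[str]:
        return list(self._records)


def migrate(src: Store, dst: Store) -> None:
    """Copy every record to a new host; addresses, and links built on them, stay the same."""
    for cid in src.ids():
        dst.put(src.get(cid))


if __name__ == "__main__":
    old_host, new_host = Store(), Store()
    post_id = old_host.put({"text": "hello, fediverse", "createdAt": "2023-01-01T00:00:00Z"})
    migrate(old_host, new_host)
    print(new_host.get(post_id) == old_host.get(post_id))  # True
```

Because a post’s address is derived from the post itself, a new host cannot silently alter it, and links to it survive a move.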

Two projects are currently competing in this category: AT Protocol (Bluesky) and Farcaster. A comparison of the two protocols can be found here.

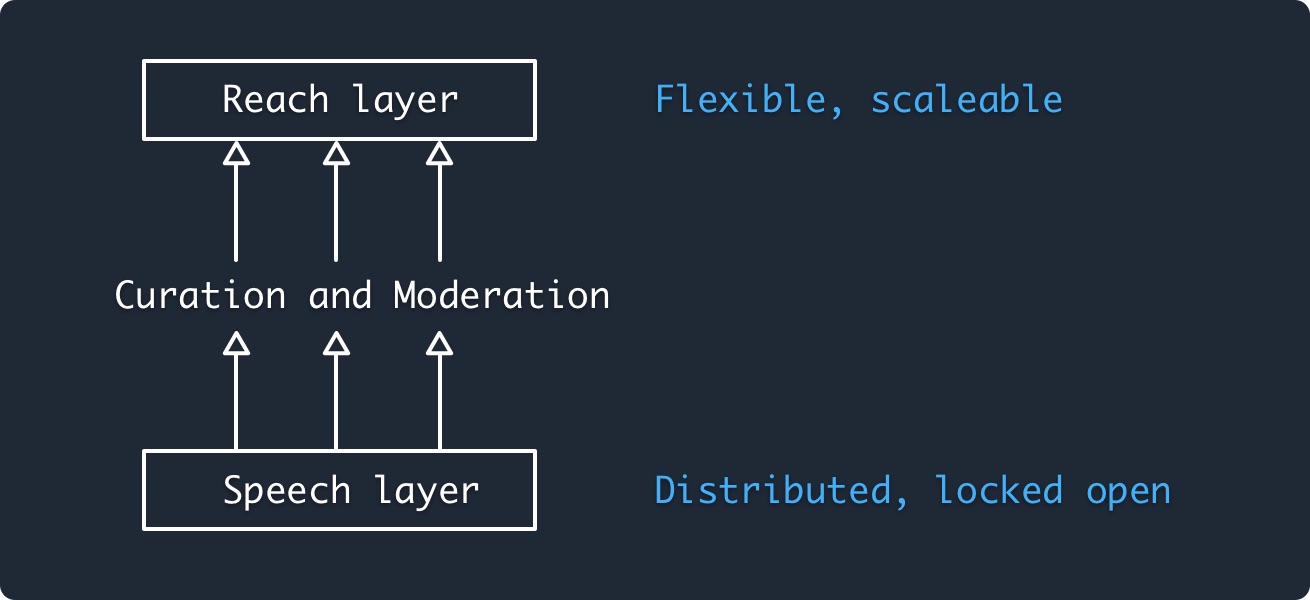

One notable feature of AT Protocol is that it builds the idea of “freedom of speech, but not freedom of reach” into its protocol architecture. The network is separated into “speech” and “reach” layers. The speech layer is intended to remain neutral, distributing authority and ensuring everyone has a voice, while the reach layer is built for flexibility and scalability. The base layer of AT Protocol, consisting of Personal Data Repositories and Federated Networking, creates a common space for speech where everyone is free to participate, similar to the Web, where anyone can put up a website. Indexing services then aggregate content from the network to provide reach and searchability, similar to a search engine.

Beyond this separation of layers, AT Protocol, and the Bluesky client in particular, plans to support an ecosystem of third-party providers for recommendation algorithms and content moderation. The team is developing a composable labeling system that lets anyone define and apply “labels” to content or accounts (such as “spam” or “nsfw”). Any service or person in the network can then choose how these labels shape the final user experience.
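The composable labeling idea can be modeled as labels published by independent labelers plus a per-user policy that decides what each label means. The Python sketch below is a hypothetical simplification, not Bluesky’s actual label schema or API: `Label`, `decide`, the labeler names, and the show/warn/hide actions are illustrative assumptions. Labels from labelers the user has not subscribed to are ignored, and when several labels apply, the most restrictive action wins.

```python
from dataclasses import dataclass
from typing import Iterable, Mapping

# Illustrative actions a client could take, ordered from least to most restrictive.
SEVERITY = {"show": 0, "warn": 1, "hide": 2}


@dataclass(frozen=True)
class Label:
    subject: str  # hypothetical identifier of a post or account
    value: str    # e.g. "spam" or "nsfw"
    source: str   # the labeler (service or person) that applied it


def decide(
    subject: str,
    labels: Iterable[Label],
    subscribed: set[str],
    prefs: Mapping[str, str],
) -> str:
    """Return the most restrictive action the user's own preferences assign to this subject."""
    action = "show"
    for label in labels:
        if label.subject != subject or label.source not in subscribed:
            continue  # wrong subject, or a labeler this user does not trust
        candidate = prefs.get(label.value, "show")
        if SEVERITY[candidate] > SEVERITY[action]:
            action = candidate
    return action


if __name__ == "__main__":
    labels = [
        Label("post-1", "spam", "labeler-a"),
        Label("post-1", "nsfw", "labeler-b"),
    ]
    prefs = {"spam": "hide", "nsfw": "warn"}
    # The same post looks different depending on whose labels a user subscribes to.
    print(decide("post-1", labels, {"labeler-a"}, prefs))  # hide
    print(decide("post-1", labels, {"labeler-b"}, prefs))  # warn
    print(decide("post-1", labels, set(), prefs))          # show
```

Labeling and acting on labels are deliberately separate here: a labeler only attaches opinions to content, while each user decides which labelers to trust and what each label should do.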

The issue of free speech versus safety is a false dichotomy. Both are essential components of a healthy and inclusive social internet. While ensuring a safe online environment is crucial, it must not come at the expense of free expression. By devolving content moderation decisions to individual instances and giving users the freedom to choose, we can strike a balance between free speech and safety. At its core, respecting users’ right to choose is an essential step toward building a social internet that works for everyone.