Line Segment Notations

Visual thinking, manual drawings and blockchain based generative art

This article is about an ongoing artistic research project that focuses on the visual language of lines and notation systems, covering concepts that range from theory through manual sketching to blockchain-based generative media. After a brief overview, two examples called Autocolliders and Less Lines are introduced as recent phases of this work.

Thinking through lines

In indigenous cultures, abstract line drawings often serve as a means of preserving memories and cultural knowledge. These drawings can range from simple geometric patterns to more complex, symbolic representations, and are often used in various cultural contexts such as storytelling, rituals and record-keeping. These drawings can also provide valuable insights into the history and beliefs of these cultures, and are considered an important part of their cultural heritage.

Artist and Bauhaus teacher Paul Klee described the first category of line within his Pedagogical Sketchbook of 1925 as

An active line on a walk, moving freely, without goal. A walk for a walk’s sake. The mobility agent is a point, shifting its position forward. […] The limitation of the eye is its inability to see even a small surface equally sharp at all points. The eye must “graze” over the surface, grasping sharply portion after portion, to convey them, to the brain which collects and stores the impressions. The eye travels along the paths cut out for it in the work.

Klee’s allegorical exploration of drawing theory is deeply rooted in nature, of which humans are very much a part rather than a competitor. His understanding of both making and receiving drawings is built on relations to natural forms and animal instincts. Contemporary psychological research has shown that infants, stone-age tribe members, and even chimpanzees are able to recognize line drawings. We even see line representation used by insects in bio-mimicry. These findings rule out any strong version of culture-based acquisition for understanding line drawings, although clearly there are many culturally based conventions used in line drawings.

Apart from hand-drawn representations, early computer graphics also worked with lines, using math and algorithms to draw pictures with plotters and printers and, later, on visual displays and computer screens. Sorting, intersection, line-drawing and other algorithms, usually named after their creators (Sutherland-Hodgman, Bresenham, etc.), were introduced to perform operations such as line clipping, visibility determination and image rendering: making sure that lines were drawn in the right order, or that parts of a picture were hidden when they should be. These algorithms were crucial for the development of early computer graphics, and continue to be used in modern computer graphics systems.
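As an illustration of the kind of line-drawing algorithm named above, here is a minimal sketch of Bresenham's algorithm. It is a common textbook formulation, not code from the artworks, and it assumes integer endpoints; the function name `bresenhamLine` is only illustrative.

```javascript
// Bresenham's line algorithm: returns the integer grid cells that
// approximate a straight line from (x0, y0) to (x1, y1).
// Assumes all four coordinates are integers.
function bresenhamLine(x0, y0, x1, y1) {
  const points = []
  const dx = Math.abs(x1 - x0)
  const dy = -Math.abs(y1 - y0)
  const sx = x0 < x1 ? 1 : -1 // step direction along x
  const sy = y0 < y1 ? 1 : -1 // step direction along y
  let err = dx + dy // accumulated error term
  let x = x0
  let y = y0
  while (true) {
    points.push([x, y])
    if (x === x1 && y === y1) break
    const e2 = 2 * err
    if (e2 >= dy) { err += dy; x += sx }
    if (e2 <= dx) { err += dx; y += sy }
  }
  return points
}

console.log(bresenhamLine(0, 0, 4, 2))
```

The algorithm uses only integer additions and comparisons, which is one reason it suited plotters and early raster displays.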

Autocolliders

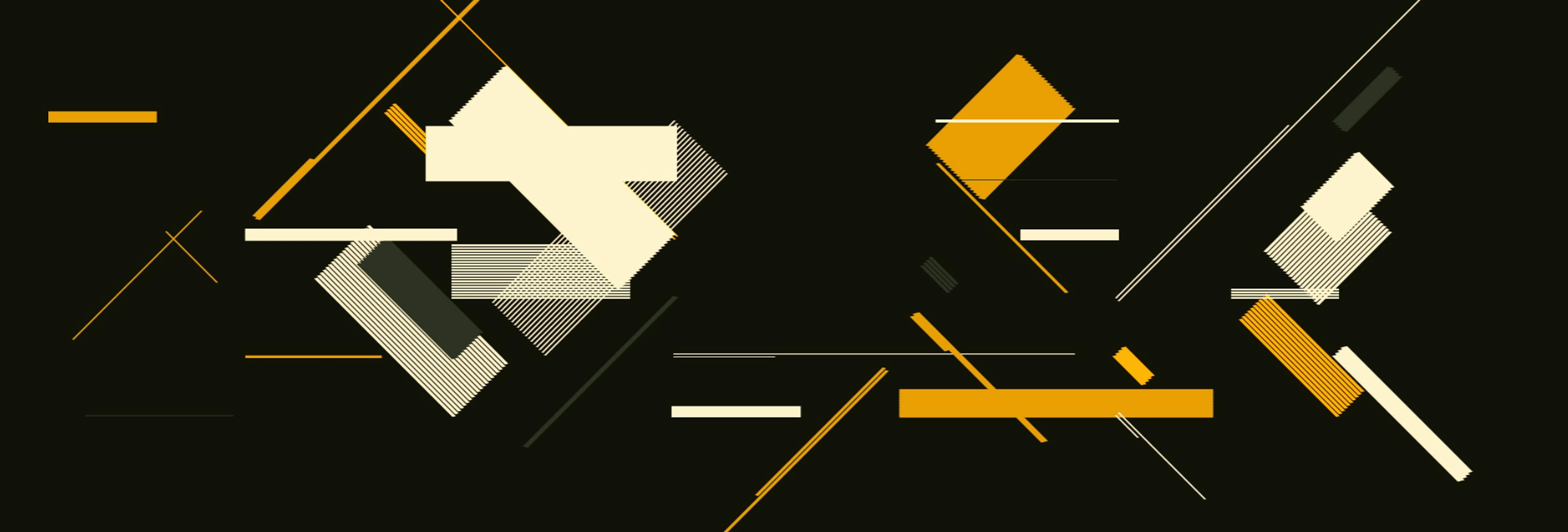

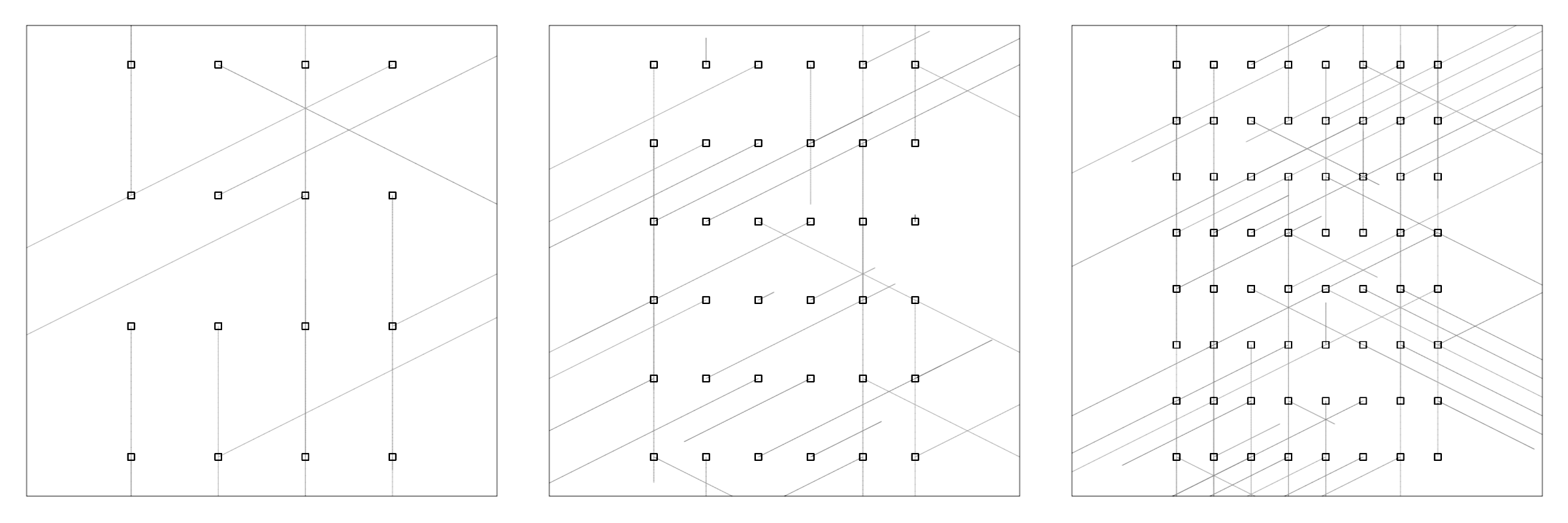

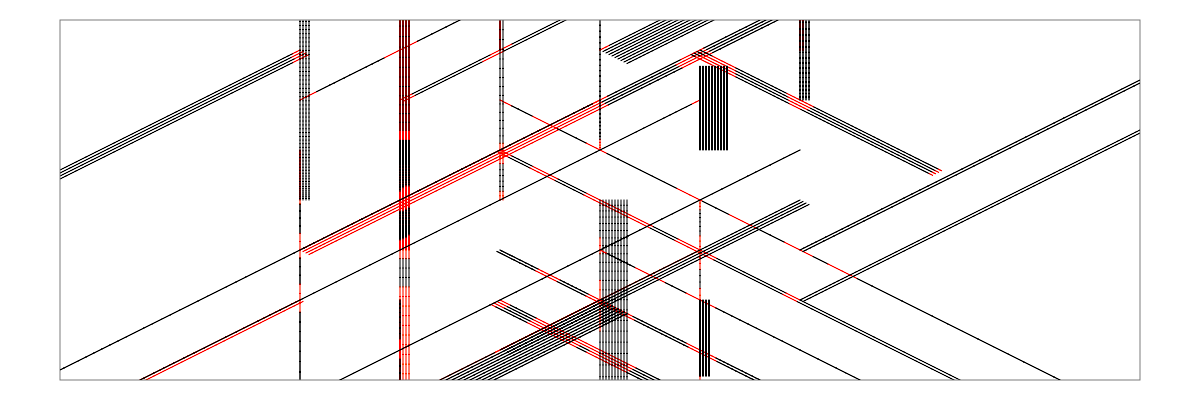

Autocolliders is a series of artworks that consist of straight lines with an ordered distribution in a finite two-dimensional space. They rhyme upon themselves, they repeat, they rotate; some of them never meet, some of them intersect occasionally, disrupting each other’s trajectories. When a scene is constructed, the empty space is divided along a grid with different resolutions.

At each grid point, a line grows out in one of six predefined angles, with varying length. These vectors are then repeated and occasionally transformed into directions perpendicular to their orientation, creating a dense, ordered structure of overlapping layers. Like flipping coins or rolling dice, the provided instructions can lead to interesting visual choreographies, depending on how their random chance operations are distributed.
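The construction rule described above can be sketched roughly as follows. This is a simplified guess at the structure, not the code of the artwork: the six angle values, the 25% chance of a perpendicular turn, the length range and the names `ANGLES` and `growSegments` are all assumptions made for illustration.

```javascript
// Hypothetical values: the article only states that there are six
// predefined angles, not which ones.
const ANGLES = [0, 30, 60, 90, 120, 150].map((deg) => (deg * Math.PI) / 180)

// Builds one segment per grid cell. `rand` is any function returning
// a number in [0, 1), so a seeded generator can be swapped in.
function growSegments(width, height, resolution, rand = Math.random) {
  const cellW = width / resolution
  const cellH = height / resolution
  const segments = []
  for (let row = 0; row < resolution; row++) {
    for (let col = 0; col < resolution; col++) {
      // Start each line at the centre of its grid cell
      const x = (col + 0.5) * cellW
      const y = (row + 0.5) * cellH
      let angle = ANGLES[Math.floor(rand() * ANGLES.length)]
      // Occasionally turn the segment perpendicular to its orientation
      // (the 0.25 probability is an assumption)
      if (rand() < 0.25) angle += Math.PI / 2
      // Varying length, between 0.5 and 2.5 cell widths (an assumption)
      const length = cellW * (0.5 + rand() * 2)
      segments.push({
        x1: x,
        y1: y,
        x2: x + Math.cos(angle) * length,
        y2: y + Math.sin(angle) * length,
      })
    }
  }
  return segments
}

const segments = growSegments(400, 400, 8)
console.log(segments.length) // one segment per cell of the 8 x 8 grid
```

Passing the random source in as a parameter keeps the chance operations in one place, so their distribution can be changed without touching the geometry.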

These basic rules act as the grounding for the image architecture. Interesting twists and complexities start to emerge within the system when collision avoidance is added to the segments. Empty spaces, irregular zones and glitches in the formerly continuous shapes cause sudden disruptions that shift the focus of attention from the foreground into emptiness, leaving space for imaginative territories. The colours of the scene are selected from highly constrained, manually curated palettes that were created with a focus on cleanness, simplicity and convenience.
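One simple way to picture collision avoidance between segments is an orientation-based intersection test, where a new segment is dropped if it would cross one that is already placed. The sketch below is an assumption about how such a rule could work, not the method used in the series; the names `orientation`, `segmentsCross` and `avoidCollisions` are illustrative.

```javascript
// Sign of the cross product (b - a) x (c - a): tells on which side of
// the line through a and b the point c lies (0 means collinear).
function orientation(a, b, c) {
  return Math.sign((b.x - a.x) * (c.y - a.y) - (b.y - a.y) * (c.x - a.x))
}

// True when segments p1-p2 and q1-q2 properly cross each other.
// Touching endpoints and collinear overlaps are ignored in this sketch.
function segmentsCross(p1, p2, q1, q2) {
  const o1 = orientation(p1, p2, q1)
  const o2 = orientation(p1, p2, q2)
  const o3 = orientation(q1, q2, p1)
  const o4 = orientation(q1, q2, p2)
  return o1 * o2 < 0 && o3 * o4 < 0
}

// Keeps a candidate only if it crosses none of the accepted segments.
function avoidCollisions(candidates) {
  const accepted = []
  for (const c of candidates) {
    const hit = accepted.some((s) => segmentsCross(c.a, c.b, s.a, s.b))
    if (!hit) accepted.push(c)
  }
  return accepted
}

const kept = avoidCollisions([
  { a: { x: 0, y: 0 }, b: { x: 10, y: 0 } },
  { a: { x: 5, y: -5 }, b: { x: 5, y: 5 } }, // crosses the first one
  { a: { x: 0, y: 2 }, b: { x: 10, y: 2 } },
])
console.log(kept.length)
```

Because rejected candidates leave their grid point empty, a rule of this kind would produce gaps in the otherwise regular structure, in the spirit of the empty zones described above.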

Influenced by patterns and systems that can be found in abstract textile pieces from the Bauhaus, they also inherit from the soft minimal worlds of Japanese lithographs and other oriental prints, alongside the raw, reduced and schematic color variations of electronic imagery and computer aesthetics.

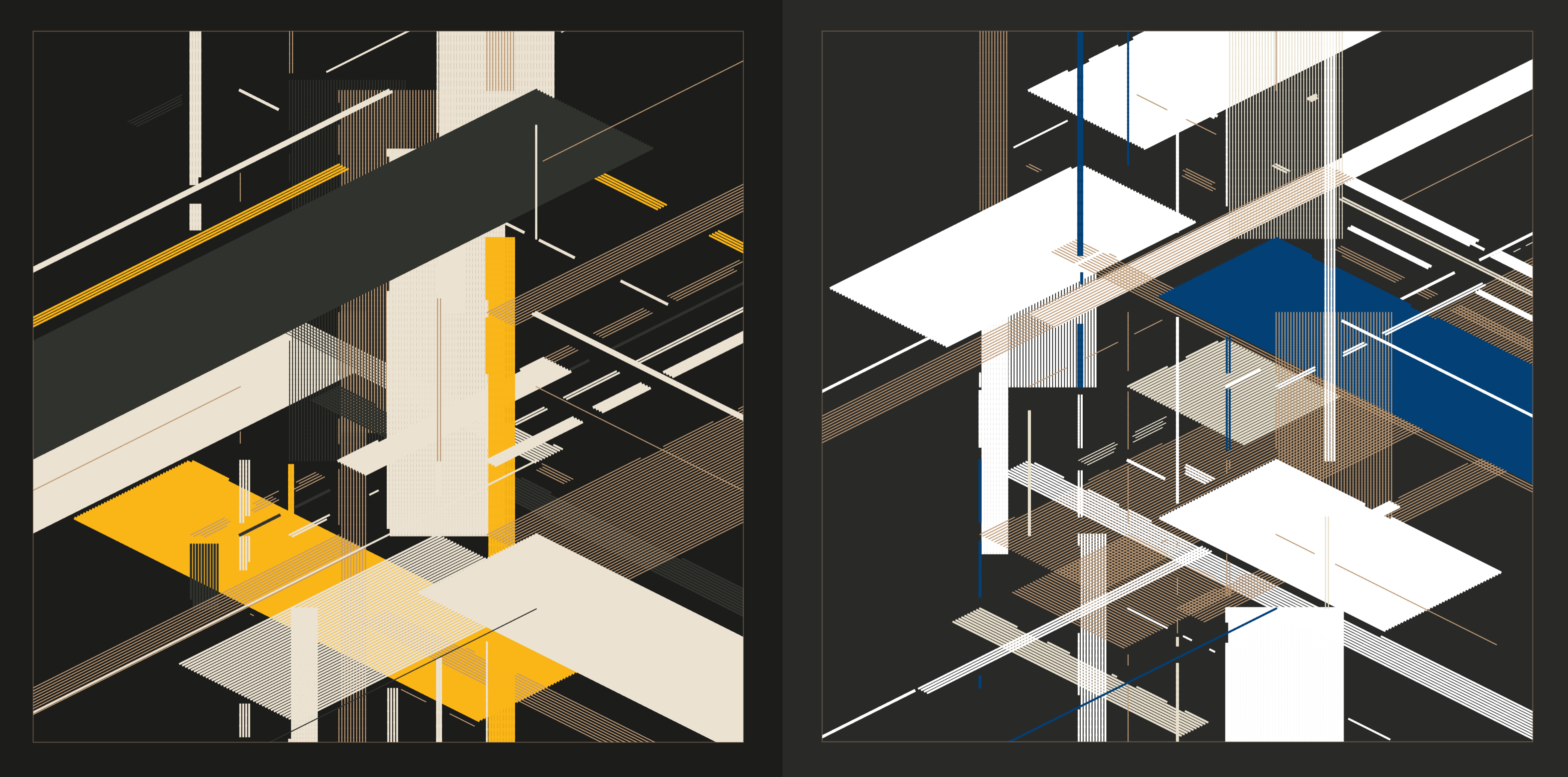

The artwork implements two features that are generated and stored as part of the token metadata. Each piece consists of a unique combination of Environment and Palette. Environmental features are selected from a set of words that describe spatial properties (sparse, frequent, dense, berserk, instinct), while the ten predefined color palettes are named after gems and minerals that are vivid and colorful by nature (pearl, onyx, opal, azurite, blizzard, tourmaline, garnet, tanzanite, iolite, quartz). As an example, the following two iterations have both different environmental densities and different palette components:

In physics, colliders are used as a research tool by accelerating particles to very high kinetic energy and letting them impact other particles. Analysis of the byproducts of these collisions gives scientists good evidence of the structure of the subatomic world and the laws of nature governing it. These may become apparent only at high energies and for tiny periods of time, and therefore may be hard or impossible to study in other ways. These principles resemble the way human attention and memory work, where catching an idea, a feeling or another intuitive process is also very challenging for a constantly scattered mind. Cognitive research and several ancient philosophies refer to this restless chatter as a manifestation of the monkey mind. Several techniques have been developed historically to navigate these mental spaces: memory palaces, tactile infrastructures, mnemonic devices, the method of loci, search trees and permutation algorithms are just a few of the myriad ways we humans shape and access the multilayered, omnidirectional scope of our cognitive environment.

Technical mediation

There are fundamental differences between traditional generative art practice and the one that uses blockchain as a medium. The latter led to the practice of long-form algorithmic art, which uses deterministic strategies (seeds derived from a transaction hash and other cryptographic functions) combined with the immutable properties of the underlying technologies. Speaking of the nature of an algorithm, with long-form generative art, collectors and viewers become extremely familiar with the output space of a program.

Autocolliders sits somewhere between short-form and long-form, since the features it implements are not derived directly from transaction parameters but are provided as an extra layer of metadata for the collection. However, while working with the code, I gradually developed a conceptual sense of what exactly the program is capable of generating, and how likely it is to generate one output versus another, so I kept that agency in mind during the whole workflow. This part of the process was a bit different from my pieces on fx-hash, since drops on Zora work differently: here, features and token metadata need to be configured during the initial deployment of the whole series instead of being generated for every token upon each mint.

All iterations within the series were created from exactly the same code with no manual intervention. Features are derived from the actualization of the pieces when running them and saving them in scalable vector graphics (SVG) format. The feature parameters are saved into the names of the output files along with unique timestamps:

const cFileName = `autocoll-${densityNames[cNum]}-${paletteNames[cPalette]}-${Date.now()}`

save(`${cFileName}.svg`)

Here densityNames holds the predefined spatial properties, cNum is the index selected from them, paletteNames holds the predefined color names and cPalette is the selected colour index. The results are then loaded by another script that reads each filename and generates token metadata from those parameters for all the artworks, writing it into one CSV file that links the metadata to the actual SVG files:

const fs = require('fs')

// Each file name follows autocoll-<density>-<palette>-<timestamp>.svg

const files = fs.readdirSync('./svgs')

const words = ["poetry", "permutation", "notation"]

for (let i = 0; i < files.length; i++) {

const rnd = words[Math.floor(Math.random() * words.length)]

const [, density, palette] = files[i].split("-")

const cToken = `Autocollider #${i+1},"Line segment ${rnd}, CC BY-SA 4.0","${files[i]}","${density}","${palette}",`

// A synchronous append keeps the CSV rows in the same order as the files

fs.appendFileSync('_tokens.csv', "\n" + cToken)

}
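For comparison, the long-form strategy mentioned earlier in this section, where a seed is derived from a transaction hash, could look roughly like the sketch below. This is not how Autocolliders assigns its features (they are preconfigured metadata, as described above), and it is not any platform's actual method: the FNV-1a hash, the mulberry32 generator, the placeholder hash string and the name `pickFeatures` are assumptions chosen for illustration. Only the feature names come from the collection.

```javascript
// Feature names as listed for the collection
const densityNames = ["sparse", "frequent", "dense", "berserk", "instinct"]
const paletteNames = ["pearl", "onyx", "opal", "azurite", "blizzard",
  "tourmaline", "garnet", "tanzanite", "iolite", "quartz"]

// Folds a string such as a transaction hash into a 32-bit seed using
// the FNV-1a hash (an illustrative choice)
function seedFromHash(hex) {
  let h = 0x811c9dc5
  for (let i = 0; i < hex.length; i++) {
    h ^= hex.charCodeAt(i)
    h = Math.imul(h, 0x01000193)
  }
  return h >>> 0
}

// mulberry32: a small seedable generator returning numbers in [0, 1)
function mulberry32(seed) {
  let a = seed >>> 0
  return function () {
    a = (a + 0x6d2b79f5) >>> 0
    let t = a
    t = Math.imul(t ^ (t >>> 15), t | 1)
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61)
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296
  }
}

// The same hash always yields the same features
function pickFeatures(hash) {
  const rand = mulberry32(seedFromHash(hash))
  return {
    environment: densityNames[Math.floor(rand() * densityNames.length)],
    palette: paletteNames[Math.floor(rand() * paletteNames.length)],
  }
}

// Placeholder value, not a real transaction hash
const exampleHash = "0x" + "ab12".repeat(16)
console.log(pickFeatures(exampleHash))
```

Since every random decision flows from the seed, re-running the program with the same hash reproduces the same piece, which is what allows collectors to explore the output space of a long-form program.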

Preparing the body of the artwork using custom code snippets and modular algorithmic extensions to be deployed on the blockchain resonates with Bruno Latour’s idea of “technical mediation”, which stipulates that agency is distributed among various actors. The medium defines the way one will interact with the creative process, and this results in new forms of cognitive modalities and aesthetic outcomes. He gives the example of a person and a gun, in themselves two separate things, which combine to form a new thing. Latour highlights how relationships between humans and technologies create complex, hybrid constructs that are often inseparable.

Complex and hybrid structures also run throughout generative art, whether short-form, long-form or anything in between, producing new, unique hybrid constructs: unlike copies of discrete artworks, both digital and physical, these generative artworks do not have a prototypical image but a possibility space constructed from the derivations of their underlying algorithms.

Less Lines

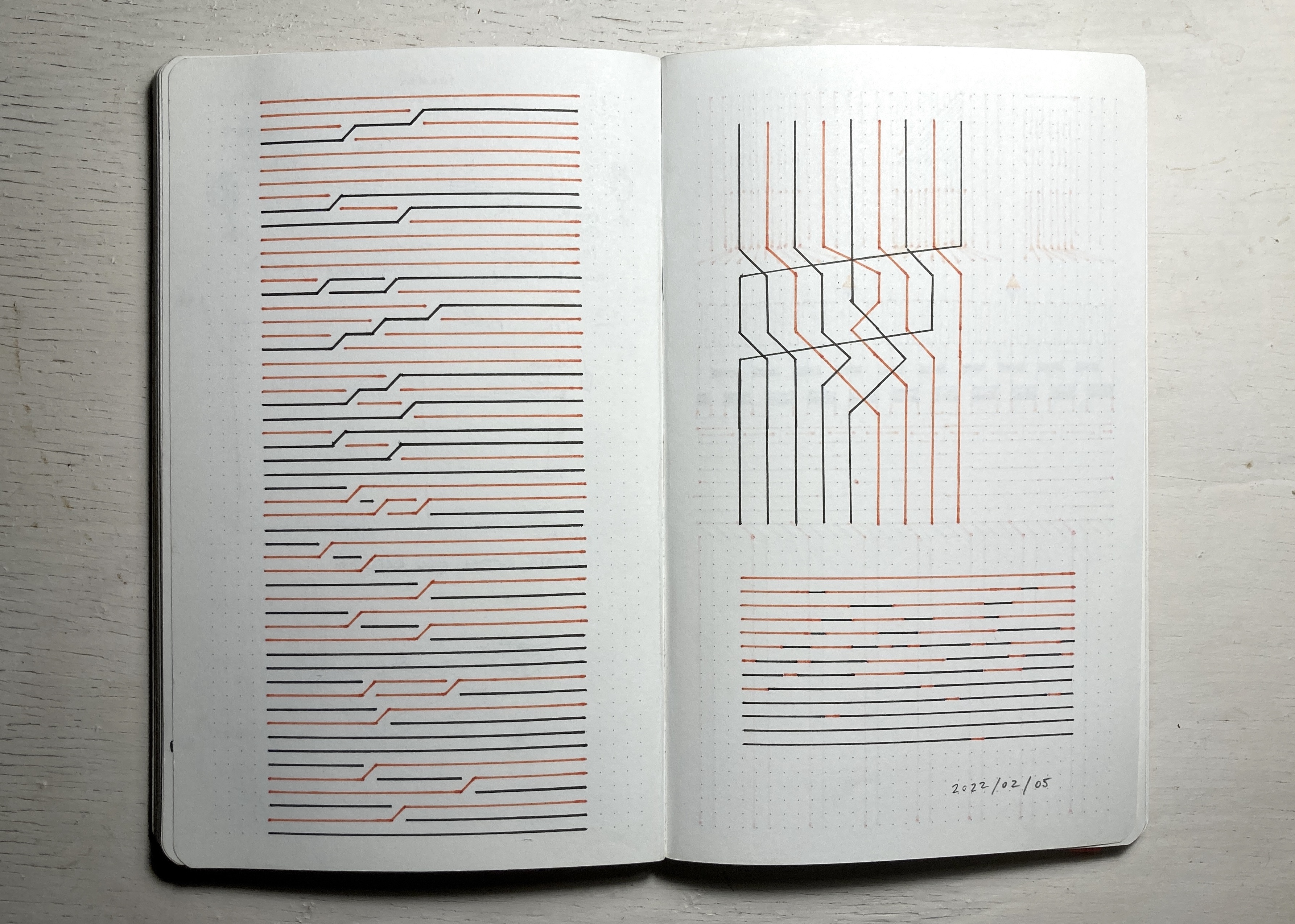

While working with static, aleatoric compositions is essential within my workflow, temporal aspects are also important areas of my research. The relationships between movements, image structures and sounds are still open-ended cultural territories with many possibilities for further exploration. Apart from classical animations, clips and video games, the new temporality of network-based media introduces many conceptual layers for sonic tinkering.

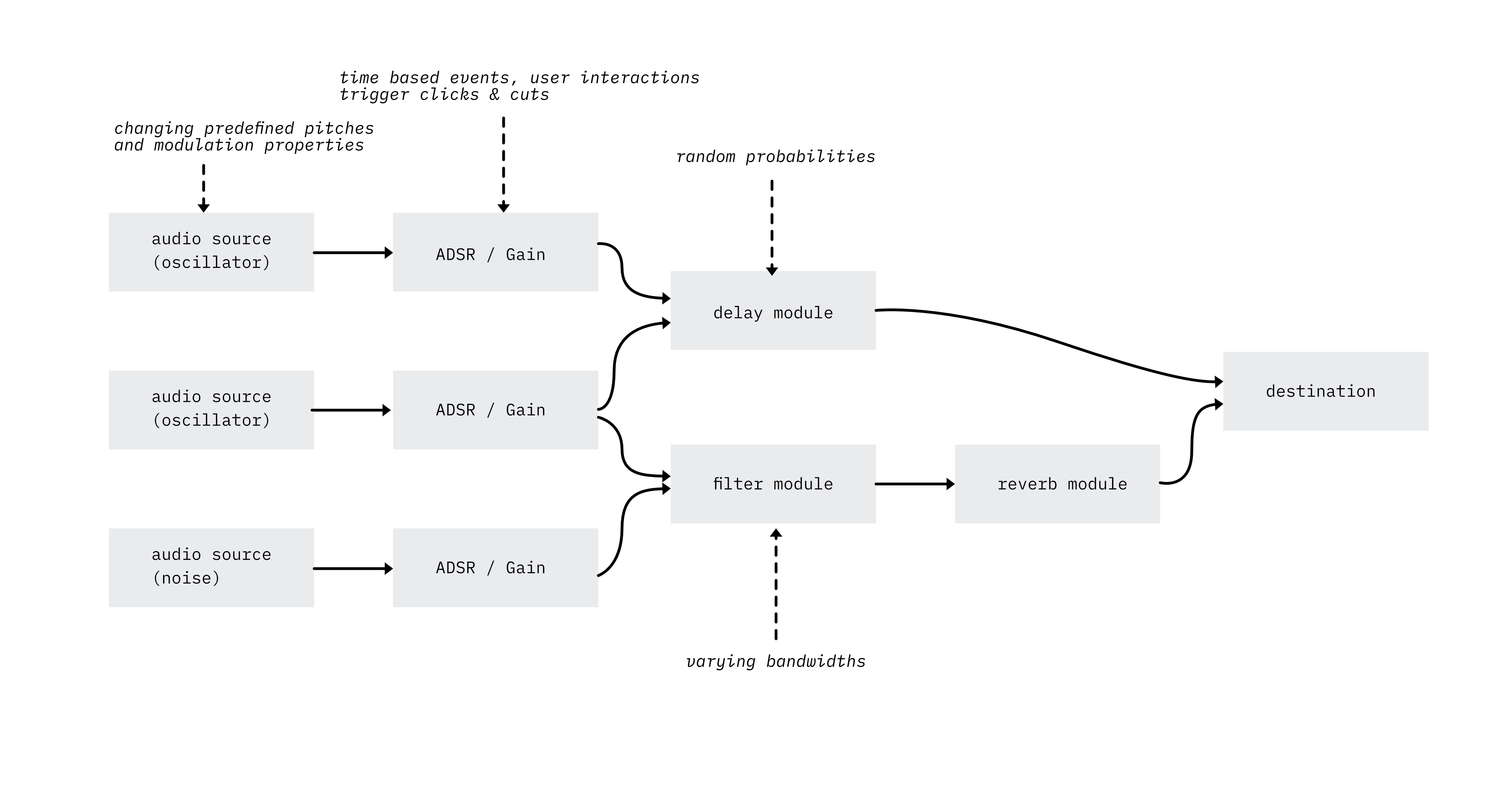

Within the physical world, rhythmical movements, events and interactions all cast sounds into their environments as byproducts of their moving energies. As a creative process, the act of sonification builds on these properties, and there are many ways to express emotions and semantic relations by converting events and quantitative data into sounds. In my previous works, I often used realtime data feeds, user interactions or simple mathematical algorithms as driving forces for producing sounds, creating different sonic textures and harmonies. Coming from a visual programming background, I like to think of sound as a continuous flow of entities through different digital signal processing (DSP) components, where synthesized sources travel through different filters and effect components before hitting their destination, aka the speakers.

I usually develop my own WebAudio components, which consist of generators and modifiers that can be chained together easily. Just like in any dynamic, cybernetic system, environmental events and temporal shifts both affect the behavior and outcomes of the audio engine. If a state changes, an ADSR envelope might be triggered, or a filter coefficient or a delay time might be set to a different range as time passes and new events occur. This method of working with sounds is very similar to building auditory displays or musical atmospheres for games, where game states affect the outcome. The intention here is to bypass regular musical structures and narrative elements and let the algorithmic choreographies unfold by their own internal rules without any manual intervention, somewhat similar to working with visual math algorithms or data visualization systems.
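To make the envelope idea concrete, here is a minimal sketch of a linear ADSR curve written as a plain function, so it runs outside the browser as well. It is not taken from the author's components; the name `adsrLevel`, the parameter names and the linear segments are assumptions, and the sketch assumes the note is held at least as long as the attack and decay together.

```javascript
// Returns the envelope level (0..1) at time t, in seconds, for a note
// that is held for `hold` seconds and then released.
// Assumes hold >= attack + decay.
function adsrLevel(t, { attack, decay, sustain, release, hold }) {
  if (t < 0) return 0
  // Attack: rise linearly from 0 to 1
  if (t < attack) return t / attack
  // Decay: fall linearly from 1 to the sustain level
  if (t < attack + decay) {
    return 1 - (1 - sustain) * ((t - attack) / decay)
  }
  // Sustain: stay level while the note is held
  if (t < hold) return sustain
  // Release: fall linearly from the sustain level to 0
  if (t < hold + release) {
    return sustain * (1 - (t - hold) / release)
  }
  return 0
}

const envelope = { attack: 0.1, decay: 0.2, sustain: 0.5, release: 1, hold: 1 }
for (const t of [0.05, 0.5, 1.5, 3]) {
  console.log(t, adsrLevel(t, envelope))
}
```

In a browser, the same breakpoints could be scheduled on a gain parameter with `AudioParam.linearRampToValueAtTime`, so that a state change in the system triggers the envelope.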

Within Less Lines, the elements are moving slowly, formulating a quasi-still image, with no progress in time, a perpetual resonance stuck in a very slow, infinite loop. I like to think of these subliminal movements as groundings for the audio composition, formulating simple sine-wave oscillations in the audible domain, ending up as continuous ambient drone harmonies. This timeless, horizontal trajectory is then disrupted with sudden glitches and bursts, within both the sounds and the visuals, in forms of disappearing and reappearing elements.

These are the collisions of the monkey mind, the artifacts that disrupt the calmness of clear presence, similar to widely known mental states that cloud the mind and manifest in unwholesome actions within our everyday lives and our experience of our environment. The system we observe is in a continuous struggle to eliminate these artifacts, trying to clear the space, to make less lines and leave fewer obstacles within the scene.

Details

Both collections are built with custom, standard ERC-721 minting contracts with optional extensibility frameworks, which means that these contracts allow other smart contracts to interact with them under different permission models. This approach enables cultural composability, which is based on the idea that creators should own their digital goods through true digital ownership and creative sovereignty. As an experiment, a few editions of Less Lines are listed on Foundation to enhance visibility, while other editions are still available to collect on Manifold Gallery. Autocolliders is live and available to mint on Zora.
